There has been a definite increase in the number of reports on webmaster forums, including Google's own, of sites inadvertently blocking Googlebot, and many of the reports involve the popular WordPress plugin Wordfence.

Google has never published a list of IPs they crawl from, as those IPs can change at any time. Google also has bots used for specific products, such as the ones for Google AdSense and Google AdWords.

One of the issues is Google's locale-aware crawling by Googlebot, which not only comes from completely new IPs, but also from IPs based in countries outside of the US, and this seems to be triggering false positives in bot blocking scripts. If you are unsure whether a visiting Googlebot is a real one or not, you can do a reverse DNS lookup to confirm.
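As a rough illustration of that reverse DNS check, here is a minimal Python sketch. It assumes that genuine Googlebot hostnames end in `googlebot.com` or `google.com`, and it adds a forward lookup to confirm that the hostname resolves back to the same IP. The function name `is_verified_googlebot` and the injectable lookup parameters are hypothetical, chosen for this example only; this is not how any particular plugin implements its check.

```python
import socket

# Assumption: genuine Googlebot hostnames end in one of these domains.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def _reverse(ip):
    """Return the PTR hostname for an IP address."""
    return socket.gethostbyaddr(ip)[0]


def _forward(host):
    """Return the set of IP addresses a hostname resolves to."""
    return {info[4][0] for info in socket.getaddrinfo(host, None)}


def is_verified_googlebot(ip, reverse_lookup=None, forward_lookup=None):
    """Forward-confirmed reverse DNS check for a claimed Googlebot IP.

    The lookup functions can be swapped out (for testing, for example);
    by default they use the system resolver.
    """
    reverse_lookup = reverse_lookup or _reverse
    forward_lookup = forward_lookup or _forward

    # Step 1: reverse lookup, IP to hostname.
    try:
        host = reverse_lookup(ip)
    except OSError:  # socket.herror and socket.gaierror are subclasses
        return False

    # Step 2: the hostname must belong to a Google crawl domain.
    host = host.rstrip(".").lower()
    if not host.endswith(GOOGLE_SUFFIXES):
        return False

    # Step 3: forward lookup, the hostname must resolve back to the same IP.
    try:
        addresses = forward_lookup(host)
    except OSError:
        return False
    return ip in addresses
```

Calling `is_verified_googlebot(ip)` with an address taken from your access logs performs both lookups against live DNS. The forward step matters because whoever controls an IP block can set its PTR record to any hostname they like, so a reverse lookup on its own is not proof.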

Why do people block bots? For a variety of reasons: to reduce server load, to stop attacks, to prevent fake referrals. Because many people spoof Googlebot, users will generally whitelist a list of known Googlebot IPs. But when Google switches up the IPs and a user inadvertently blocks Googlebot, it can take quite some time to rectify the situation, as noted in this thread from WebmasterWorld.

I have a system that prevents ‘bots from crawling my site. It has a whitelist, to which I add Google IPs. I had always added them manually because new IPs didn’t come up too often, and I wanted to make sure that no one was spoofing Google. About 10 days ago, Google apparently switched to crawling from about a dozen new IPs. I was not paying close attention to my system and those IPs got blocked. They were blocked for about 3 or 4 days.

…

The traffic picked up a little bit, but slowly. Google wasn’t adding the pages back even though they had recrawled them. Some pages came back, but some of my top pages (for example, Connor McDavid) were nowhere to be found in Google – even when I searched with my site’s name (as many users do). I tried asking Google to recrawl multiple times, but after a week they still aren’t adding back pages for which I request a recrawl.

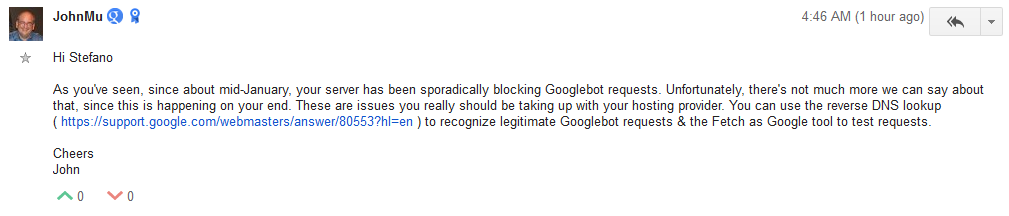

John Mueller also commented today on the Google Webmaster Help forums about the same situation, where a site is blocking Googlebot.

Wordfence, a popular WordPress plugin for blocking bots, is one that repeatedly comes up, with both the free and paid versions having issues. (Added: Wordfence has some documentation on the specific Googlebot feature so webmasters can ensure they don’t accidentally block Google.)

Hosting companies can also block Googlebot to save server resources. Many, many years ago, GoDaddy blocked Googlebot from crawling all the sites it was hosting for its clients.

Bottom line, if you are using any kind of bot blocking script, you will want to check Google Webmaster Tools daily (if not more than once a day) for any signs of Googlebot being blocked.

Jennifer Slegg

Mark Maunder says

Hi Jennifer,

Looks like you’re citing a support thread that speculates that Wordfence might block Googlebot as a source for your headline that claims we actually block Googlebot.

We’ve been successfully allowing verified Googlebot crawlers to crawl our customer sites while blocking the bad guys for around 4 years now without a problem. We currently have no open support tickets related to any issues with Googlebot. There is no current issue on our forums talking about this. There is merely this support thread on an external system that you’ve cited as a source.

I’ve responded to the support thread you’ve linked to and suggested they too read our documentation which explains how we verify the real googlebot, block fake googlebots and make sure your SEO stays healthy while protecting your website:

http://docs.wordfence.com/en/Wordfence_options#Verified_Google_crawlers_have_unlimited_access_to_this_site

As I mentioned on the support forum you linked to – for the truly paranoid, in Wordfence you can even allow anyone claiming to be Googlebot with a UA header to have unlimited crawl access to your site, but we don’t recommend this because we do a darn good job of verifying the real googlebot through a PTR lookup.

Regards,

Mark Maunder – Wordfence Founder/CEO.

Jennifer Slegg says

Thanks for commenting… you might also want to search the Google help forums, as there were multiple threads with Wordfence being mentioned as well.

Mark Maunder says

Not really my responsibility since it’s not my article. I’d like to see those additional sources cited by you if you think there’s a real concern here. We do a pretty good job of keeping abreast of our ticketing system, forums, twitter and other sources as to any potential issues or feedback with Wordfence. That’s how I found your article.

Mark.

Jennifer Slegg says

I was just simply making you aware of where I have seen the repeated references… it seemed to be on the uptick with the new IPs Google began using.

Mark Maunder says

So my concern is that your headline says that we’re blocking Google. That’s damaging to us unless you 100% verify this because it’s libelous. Jennifer to be clear, I’m not some suehappy a-hole CEO. But put yourself in my team and my shoes. A blogger posts an article saying that we’re blocking Googlebot. No matter who the firewall product is, that’s horrifying and I sure as heck wouldn’t use them.

I’m having this conversation with you on your blog in a public forum because I genuinely am concerned. I’ve read the support forum you linked to and there’s no evidence there that we’re blocking googlebot at all.

So help me out here. Where are you seeing evidence that backs up your headline of “Be Wary of Blocking Googlebot with “Bad Bot” Blocking Software, Including Wordfence”.

Either give my team and I something we can work with – which I can assure you we’ll jump on and get fixed right away. Or retract the article and we’ll just move on.

As an analogy: I post something that says “visiting thesempost.com will give you measles.” You wouldn’t be too happy unless I had evidence it was true and of course you’d immediately fix the issue.

So again, I’m concerned on two levels: A) if it’s true we will get all over this – and you really need to provide some hard evidence that it is – or what appears to be way more likely considering we’re at the center of our own product feedback, which is B) Wordfence does a great job of not blocking googlebot and being a good firewall and someone on a support forum somewhere got a little confused.

Mark.

Jennifer Slegg says

Do you have a support link on how webmasters can be certain they aren’t accidentally blocking Googlebot? It seems to be something that is happening, and it might not be obvious to some users what they are doing (or it would be a good thing to have on your site for reference for those worried about it, if you don’t). If you have something like that, I am happy to add it to the article so those that are using Wordfence can reassure themselves they aren’t accidentally blocking or make the changes so it can’t happen. From what I’ve been told, it’s people wanting to block those spoofing Googlebot but when they do so, they are blocking real Googlebots with new Google IPs. Even the best of us can change settings thinking “wow, this is cool!” without seeing the bigger picture of what might happen when we do it.

That said, Wordfence is valuable as a security plugin and I have recommended it to others who have had issues with sites being hacked, particularly when the sites deal with topics that tend to be spammed/compromised more than others. But whether it is a default setting or webmasters accidentally changing a setting that inadvertently causes it, I was simply making people aware they need to check their WordPress settings to ensure they aren’t accidentally doing it, particularly with the new IPs Google is using. But it is also likely because it is the most recommended security plugin for WordPress why the name seemed to come up again and again.

Here you go, sorry it took a bit longer, I had them saved on my laptop from when I did the original story… there are likely more on the Google forums, but their search can be a little difficult when trying to find relevancy plus newest:

Wordfence blocked Googlebot IPs: https://productforums.google.com/forum/#!searchin/webmasters/wordfence/webmasters/dnlyFpTjNzc/zN64fc_n0egJ

Another case of Wordfence blocking Googlebot: https://productforums.google.com/forum/#!searchin/webmasters/wordfence/webmasters/R4Jr_332OdI/sbqhID9mmlAJ

Lifting blocked IPs worked: https://productforums.google.com/forum/#!searchin/webmasters/wordfence/webmasters/t-XVPWwTZk0/1PjUJW0BrtoJ

Could be country blocking Googlebot: https://wordpress.org/support/topic/google-crawler-blocked-when-wordfence-firewall-is-activated

Googlebot blocked: https://wordpress.org/support/topic/google-cant-access-site-robottxt-error

Googlebot blocked with plugin activated: https://wordpress.org/support/topic/google-crawler-blocked-when-wordfence-activated

“Fake” Googlebot: https://wordpress.org/support/topic/blocking-fake-googlebot

Blocked Googlebot: https://wordpress.org/support/topic/falcon-engine-blocking-googlebot-and-msnbot

Blocked Googlebot with both Wordfence & Cloudflare used together: https://wordpress.org/support/topic/wordfence-blocking-googlebot-when-using-cloudflare

Throttled Googlebot: https://wordpress.org/support/topic/googlebot-throttled

Mark Maunder says

I should also add that we don’t rely on whitelisting static IP’s as you’ve suggested as a way to verify Google and you wouldn’t want to use any product that does.

Mark Maunder says

Thanks Jennifer. Looks like I can’t reply to your post so writing here. Taking them one at a time. Also see my comments below – in summary we’ve opened an issue to investigate some of these cases. Nothing verified but we just want to be absolutely sure.

https://productforums.google.com/forum/#!searchin/webmasters/wordfence/webmasters/dnlyFpTjNzc/zN64fc_n0egJ

User has option to block fake googlebot crawlers enabled and is emulating googlebot with their browser. Looks like this leads to speculation that the real googlebot is being blocked but it’s likely something else. Here’s documentation about the option:

http://docs.wordfence.com/en/Wordfence_options#Immediately_block_fake_Google_crawlers:

https://productforums.google.com/forum/#!searchin/webmasters/wordfence/webmasters/R4Jr_332OdI/sbqhID9mmlAJ

We’ve just re-tested fetch as google ourselves and it works under all conditions – I’m wondering if they weren’t using a non webmaster tools version of fetch as google which would have been blocked if fake google crawler blocking is enabled. I’ve added this to an issue I’ve opened (see below).

https://productforums.google.com/forum/#!searchin/webmasters/wordfence/webmasters/t-XVPWwTZk0/1PjUJW0BrtoJ

Doesn’t actually say anything about wordfence blocking crawling and they seem confused by us and another vendor – seem to think we are them.

https://wordpress.org/support/topic/google-crawler-blocked-when-wordfence-firewall-is-activated

So the customer in this case seems to have dropped the issue – no reply for 2 weeks. However what’s interesting is that Wordfence actually doesn’t process the request when robots.txt is requested. It’s handled directly by your web server and we don’t even see the request because it doesn’t hit PHP or WordPress. The file is just served directly. So even if we wanted to block that request we couldn’t.

https://wordpress.org/support/topic/google-cant-access-site-robottxt-error

So as I mentioned above we don’t control access to robots.txt but I’m getting our guys to investigate this just to be sure something weird isn’t up.

https://wordpress.org/support/topic/google-crawler-blocked-when-wordfence-activated

So this one is an old thread but it’s interesting and I’ve appended it to an issue I’ve opened to investigate this. I suspect the guy who mentions garbled text may be the key here – I think he may have caching misconfigured. We’ll take a look at this one.

https://wordpress.org/support/topic/blocking-fake-googlebot

Looks like this one is a rogue crawler – the IP they post is not a googlebot IP and it may be the customer themselves accessing their own site via a google proxy.

https://wordpress.org/support/topic/falcon-engine-blocking-googlebot-and-msnbot

Looks like he created some advanced tools and inadvertently blocked googlebot himself.

https://wordpress.org/support/topic/wordfence-blocking-googlebot-when-using-cloudflare

In this case user set up cloudflare but didn’t have his site configured correctly with cloudflare so googlebot IP’s appeared to be cloudflare IP’s.

https://wordpress.org/support/topic/googlebot-throttled

Not sure what happened here – need more detail but I’ve added this to an issue to look into.

Thanks again for posting these. Some of them are obviously user error but there is some interesting stuff here. Nothing verified but I’ve opened an issue and I’m going to have one of our guys look into some of the items today just to be sure.

Thanks Jennifer for posting these, much appreciated. I’ll try to post an update if we have one.

Regards,

Mark.

Jennifer Slegg says

Thanks for responding. I think there might be a limit on replies to a comment thread because of nesting. I have added the support document to the article as well 🙂